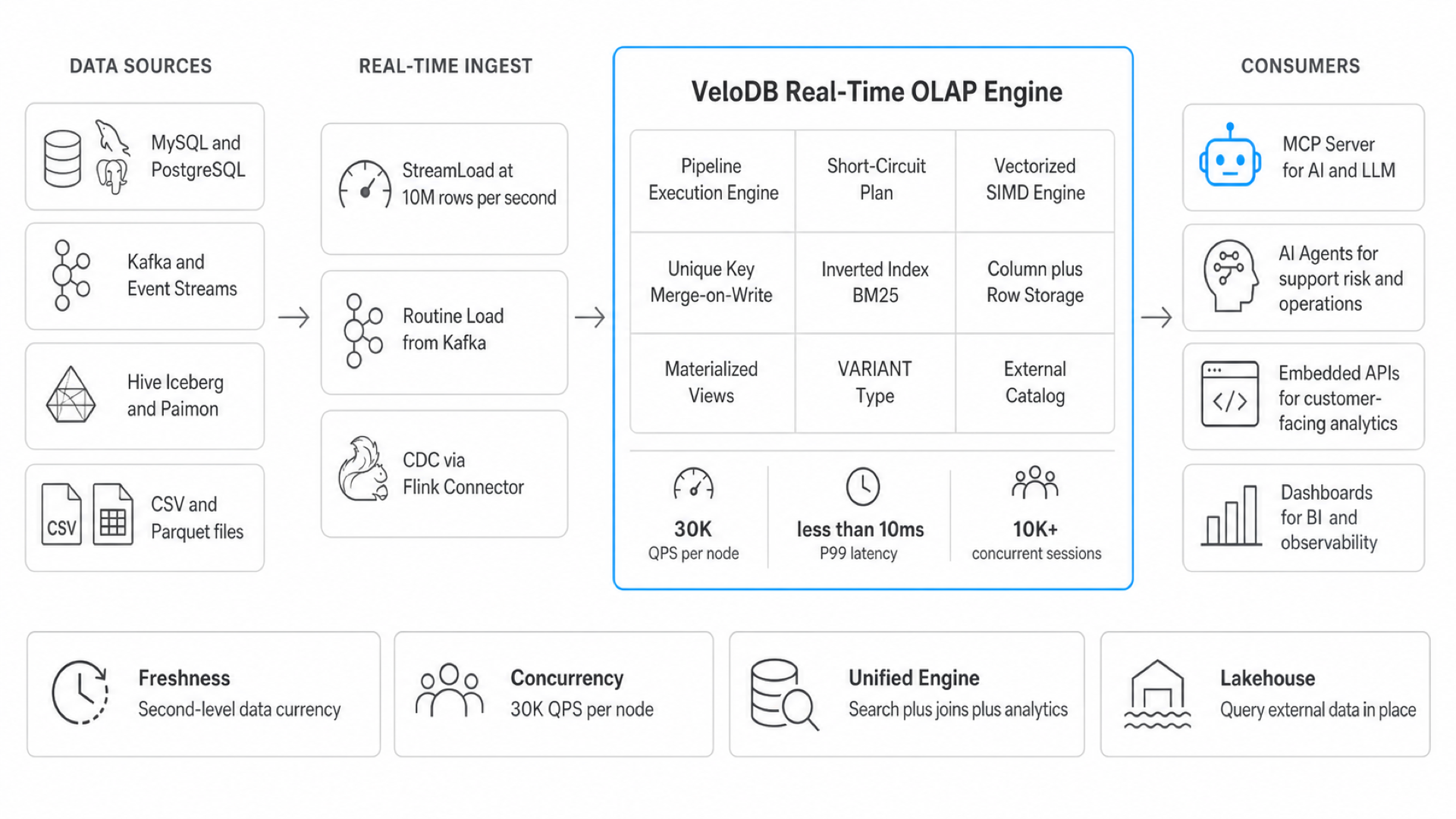

Millisecond OLAP at 30,000 queries per second.

High-concurrency, low-latency real-time analytics for agent-facing workloads. Fresh data, full-text search, SQL joins, and lakehouse context on one query path.

Agents turned analytics into a high-concurrency, low-latency problem.

The infrastructure that worked for dashboards doesn't work when the consumer is an agent, not a human.

When agents become the primary data consumer, every assumption about analytics infrastructure changes. Each business question fans out into 20-50 queries instead of one. Freshness tolerance drops from minutes to seconds. Concurrency goes from single-digit analysts to thousands of simultaneous sessions.

Batch data warehouses designed for human dashboards cannot keep pace. Their per-query pricing makes agent workloads 30x more expensive. They update hourly when agents need data from the last few seconds.

"OpenAI's acquisition of Rockset signals that real-time analytical databases are becoming foundational infrastructure for AI applications."

— Industry analysis, 2024

A slow report is a meeting. A slow agent is a failed user interaction.

An agent reply is a product interaction. It needs current state, relational context, search, and predictable latency in the same request path. The database serving it must deliver all four without stitching together separate systems.

Agent-facing analytics

The agent workload already runs on VeloDB. It just had a different name.

Customer-facing analytics has the same workload shape: fresh data, high fan-out, low-latency analytical serving on a request path.

BYD: data latency from 3 minutes to 5 seconds

BYD modernized ad-hoc analytics on VeloDB to support high fan-out queries across real-time telemetry. Non-primary-key filters run faster, and JSON columns occupy less storage. The platform now handles over 100 TB of data.

JD.com: 10B+ rows daily

JD.com ingests more than ten billion rows daily across 30+ backend nodes for real-time order tracking and inventory analytics at peak concurrency.

Same workload shape

Customer-facing analytics already demands fresh data, high fan-out, and predictable latency. Agent workloads inherit the same pattern.

Production-proven scale

The same engine that handles JD.com's peak traffic and BYD's manufacturing telemetry serves agent workloads without architectural changes.

Low latency and high concurrency, plus everything else agents need.

Each capability addresses a specific failure mode that appears when agent workloads run on infrastructure designed for dashboards.

High concurrency at agent scale

Agents fire 20-50 queries per business question across thousands of sessions. VeloDB turns that fan-out from a scaling problem into a solved one.
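As a rough back-of-the-envelope sketch, the fan-out range above can be combined with the 30,000 QPS headline figure to estimate how many business questions per second that budget covers. The function name is hypothetical, and the calculation assumes every query counts equally against the QPS budget.

```python
def questions_per_second(total_qps: int, queries_per_question: int) -> float:
    """Business questions served per second when each one fans out into N queries."""
    return total_qps / queries_per_question


HEADLINE_QPS = 30_000  # figure quoted on this page

for fan_out in (20, 50):
    rate = questions_per_second(HEADLINE_QPS, fan_out)
    print(f"fan-out {fan_out}: {rate:.0f} questions/s")
```

Under these assumptions, 30,000 QPS corresponds to roughly 600 to 1,500 business questions per second.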

Low latency on every query shape

Point queries and complex analytics both stay under 10 ms P99. Fast enough that agent interactions feel like product interactions, not database queries.

Search and joins in one system

Full-text search with BM25 scoring runs directly inside SQL. Agents combine relevance search, relational joins, and analytics in a single query, with no search sidecar.

Performance under rapidly changing data

Streaming ingest and CDC keep data fresh in seconds, not hours. Agents always operate on current state without sacrificing query performance.

Sources, search, joins, and serving on one query path.

VeloDB keeps agent context current without adding a cache, search sidecar, or separate point-serving database.

Ingest. Join. Search. Serve.

Every agent query runs through the same four-step path inside one engine, against one copy of the data, on one optimizer plan.

Ingest current events

Stream Load pushes HTTP writes at 10M rows/s. Routine Load pulls continuously from Kafka. CDC captures changes from upstream databases in seconds.
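To make the HTTP write path concrete, here is a minimal sketch that builds (but does not send) a Stream Load request. The `/api/{db}/{table}/_stream_load` path and the `label`, `format`, and `read_json_by_line` headers follow the Apache Doris Stream Load interface as we understand it; the host, database, table, credentials, and the helper name are placeholders, so check the documentation for your version before relying on it.

```python
import base64
import json
import urllib.request


def build_stream_load_request(host, db, table, rows, user, password, label):
    """Build an HTTP PUT that loads `rows` (a list of dicts) as JSON lines.

    Hypothetical helper: it only constructs the request object.
    """
    # One JSON object per line, matching the read_json_by_line header below.
    body = "\n".join(json.dumps(row) for row in rows).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"http://{host}/api/{db}/{table}/_stream_load",
        data=body,
        method="PUT",
        headers={
            "Authorization": f"Basic {token}",
            "Expect": "100-continue",
            "format": "json",
            "read_json_by_line": "true",
            "label": label,  # a unique label lets a retry of the same batch be deduplicated
        },
    )


# Placeholder values for illustration only.
request = build_stream_load_request(
    host="fe.example:8030",
    db="demo",
    table="events",
    rows=[{"id": 1, "status": "created"}, {"id": 2, "status": "shipped"}],
    user="loader",
    password="secret",
    label="events-batch-0001",
)
print(request.get_method(), request.full_url)
```

Sending the request is left out on purpose: the frontend typically redirects loads to a backend node, and how your HTTP client handles that redirect for a PUT with a body is worth testing separately.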

Join historical context

Merge-on-Write Unique Key model keeps mutable records current. Materialized views precompute repeated joins. VARIANT stores semi-structured JSON at 1/3 the storage cost.
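The idea behind merge-on-write can be illustrated with a small conceptual model: each write to an existing key marks the previous version as superseded at write time, so reads simply skip superseded rows instead of merging versions on the fly. This is a teaching sketch with hypothetical names, not VeloDB's storage implementation.

```python
class MergeOnWriteTable:
    """Toy model of a unique-key table that resolves duplicates at write time."""

    def __init__(self):
        self.rows = []        # append-only list of (key, value) versions
        self.key_index = {}   # key -> position of the live version
        self.deleted = set()  # positions superseded by a newer write

    def upsert(self, key, value):
        old_pos = self.key_index.get(key)
        if old_pos is not None:
            # Pay the cost now: mark the old version dead during the write.
            self.deleted.add(old_pos)
        self.key_index[key] = len(self.rows)
        self.rows.append((key, value))

    def scan(self):
        # Reads need no merge step; they only skip positions marked deleted.
        return [row for pos, row in enumerate(self.rows) if pos not in self.deleted]


orders = MergeOnWriteTable()
orders.upsert(1, "created")
orders.upsert(2, "created")
orders.upsert(1, "shipped")
print(orders.scan())
```

The design trade-off is that writes do slightly more work so that every read sees exactly one current row per key.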

Search relevant records

Inverted Index with BM25 scoring runs full-text search inside SQL. No sidecar. Agents combine relevance search and relational analytics in one query.
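For readers unfamiliar with BM25, the scoring itself can be sketched in a few lines. This is the textbook Okapi BM25 formula with a commonly used smoothed IDF and whitespace tokenization; the parameter defaults (`k1=1.2`, `b=0.75`) and the function name are assumptions for illustration and say nothing about how VeloDB tokenizes or tunes its index.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Return one BM25 relevance score per document for the given query."""
    if not docs:
        return []
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs
    # Number of documents that contain each term at least once.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if tf[term] == 0:
                continue
            # Rare terms get a higher weight than common ones.
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            # Term frequency saturates and is normalized by document length.
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = ["payment failed for order", "order shipped on time", "refund issued"]
scores = bm25_scores("payment failed", docs)
print(scores)
```

Only the first document contains the query terms, so it is the only one with a non-zero score.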

Serve under fan-out

Pipeline Execution Engine with SIMD vectorization. Short-Circuit Plan for point queries. Row cache and prepared statements keep P99 predictable at 30K QPS.
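A short calculation shows why predictable P99 matters under fan-out. A business question finishes only when its slowest query returns, so the chance of hitting at least one tail-latency query grows with the number of queries. The sketch assumes query latencies are independent, which is a simplification, and the function name is hypothetical.

```python
def prob_any_query_in_tail(fan_out: int, percentile: float = 0.99) -> float:
    """Probability that at least one of `fan_out` independent queries
    is slower than the given latency percentile."""
    return 1.0 - percentile ** fan_out


for fan_out in (1, 20, 50):
    p = prob_any_query_in_tail(fan_out)
    print(f"fan-out {fan_out:>2}: {p:.1%} of questions include a slower-than-P99 query")
```

Under the independence assumption, roughly 18% of questions at a fan-out of 20 and close to 40% at a fan-out of 50 include at least one query slower than P99, which is why the tail, not the median, sets the user-visible latency.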

One serving layer instead of four.

Most agent stacks combine three or four databases to cover freshness, search, joins, and concurrency. VeloDB collapses that into one.

PostgreSQL / MySQL

Real-time updates, but row-based and single-node. Analytical queries take minutes at scale. Cannot serve thousands of concurrent agent sessions.

ClickHouse / Druid

Fast OLAP scans, but weak at point lookups and in-place updates. You end up bolting on a serving cache and managing CDC pipelines separately.

Pinot / DynamoDB

High-concurrency serving, but no SQL joins or relevance search. You add an Elasticsearch sidecar and a separate analytics layer.

VeloDB

Streaming ingest, UPSERT, full-text search, joins, point lookups, and lakehouse federation on one query path. One system replaces three or four, and it charges for compute, not per query.

Go deeper on agent-facing analytics.

Technical deep-dives, customer stories, and industry analysis on why real-time OLAP is becoming the serving layer for AI agents.

How BYD cut data latency from 3 minutes to 5 seconds with Apache Doris

Real-time telemetry analytics for quality control across manufacturing lines, powered by VeloDB's Merge-on-Write engine.

JD.com: 10 billion rows per day for real-time order analytics

How one of the world's largest e-commerce platforms handles peak-concurrency real-time analytics at scale.

Rockset to OpenAI: what it means for real-time analytics and AI

Why the acquisition signals that real-time analytical databases are becoming foundational AI infrastructure.

Pipeline Execution Engine: data-driven query scheduling

How self-adjusted parallelism and pipeline-level resource management deliver predictable latency under concurrent load.

Inverted Index with BM25: full-text search inside SQL

40x text search acceleration without an Elasticsearch sidecar. How agents combine relevance search and relational joins.

Why agents need OLAP, not data warehouses

The 30x cost multiplier, the freshness gap, and the concurrency ceiling that make batch warehouses unsuitable for agent workloads.

Bring your freshness target. We will map the path.

Bring your fan-out profile, P99 budget, and the existing systems your agent reads from. We will sketch the serving path and show which of those systems VeloDB can replace.